To build a VPC with high fidelity, resiliency and efficiency, you'll want to:

- keep things simple,

- design for multi-AZ, and

- use Security Groups

Keep it Simple

There is a lot of complexity in typical LAN designs in on-premises data centers, and for good reason. With on-premises networks, subnets and address ranges become the focus for rules and filtering. This is not only unnecessary in most AWS VPC implementations, it is highly undesirable. Proper use of Security Groups (SGs) is the correct pattern for isolation and access control, and it allows you to maintain a very simple and resilient VPC design.

Before we get into SGs, we need to plan our address use and subnets to be as resilient as possible. That means predicting as little as possible and keeping our future optionality as open as possible. One option nearly everyone will want (and should adopt on day one!) is high availability. On AWS, HA means using multiple Availability Zones (AZs), so you'll want to...

Design for Multi-AZ

For our VPC to be as flexible and capable as possible, we'll want to span at least two Availability Zones so that high availability designs can be created. Future needs are hard to predict, so we'll build for two-AZ designs, but leave enough address space to add a third should some systems require it. One thing to explore before deciding which AZs to use is that some EC2 Instance Types are only available in certain AZs (some of the more specialized ones like Cluster Compute and GPU), so make sure you're building in the AZs that are the best match. If you have requirements for large quantities of EC2 Instances now or in the future, it's a good idea to reach out to AWS Support to see which Region/AZs are going to be your best bet for available resources. This is usually not a major issue unless you want a significant portion of the instances of a given type.

The first thing we'll do is carve up our /16 into some usable subnets. I'm going to leave as much of the /16 unallocated as possible until it's needed - remember to preserve your optionality! /24 subnets divide up the /16 evenly without waste, are a manageable size for most systems and are small enough to allow flexible provisioning over time. We'll start by adding a /24 public subnet to each of two AZs, and we'll use odd numbers in AZ A and even in AZ B. For the moment, don't worry about the details of how we'll separate different business systems inside these subnets, which will be shared. Sharing is very important as it allows us to use as much of the address space as possible. Isolation by subnet is possible, but it is very expensive and predictive, and those are the enemies of resiliency. This produces:

In this design, we can cross AZs in a system to gain high availability, but we only have a single subnet per AZ and they are public. Clearly, this won't be adequate for our original design, so we'll add two more /24 subnets to allow for private networks. Now we have:

Notice that you can immediately know which AZ an instance is in based on the IP address - odds in AZ A and evens in AZ B. The VPC described in Part 2 of this series could fit neatly into the simple VPC described above - all 632 hosts of it. So where the Part 2 VPC used 70% of the address space due to overuse of provisioned subnets and was stuck on a single AZ, we now have over 98% of our /16 left to use in other ways in the future! This pattern of odd/even numbering can be maintained through the life of the VPC since, as the number of servers grows, we can simply extend the public, private or both to additional /24 subnets:

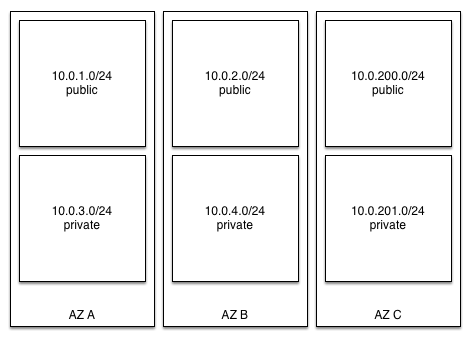
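To make the arithmetic concrete, here is a minimal sketch of the four-subnet layout using Python's standard `ipaddress` module. The `10.0.0.0/16` range, the subnet names and the specific third octets (1 to 4) are assumptions for illustration only; the only things taken from the design above are the /24 size and the odd/even split between AZ A and AZ B. The count of five reserved addresses per subnet reflects how AWS holds back the first four addresses and the last address of every subnet.

```python
import ipaddress

# Assumption: the VPC uses 10.0.0.0/16; the design above only says "/16".
VPC = ipaddress.ip_network("10.0.0.0/16")

# AWS reserves the first four addresses and the last address in every subnet.
AWS_RESERVED_PER_SUBNET = 5

# All 256 possible /24s in the VPC, indexed by third octet.
ALL_24S = list(VPC.subnets(new_prefix=24))

# Hypothetical plan: odd third octets in AZ A, even third octets in AZ B.
plan = {
    "public-a": ALL_24S[1],
    "public-b": ALL_24S[2],
    "private-a": ALL_24S[3],
    "private-b": ALL_24S[4],
}

allocated = sum(net.num_addresses for net in plan.values())
usable = sum(net.num_addresses - AWS_RESERVED_PER_SUBNET for net in plan.values())
free_pct = 100 * (1 - allocated / VPC.num_addresses)

for name, net in plan.items():
    print(f"{name:10} {net}")
print(f"usable hosts: {usable}, unallocated: {free_pct:.1f}%")
```

Under these assumptions the four subnets offer 1004 usable addresses, which is enough room for the 632 hosts from Part 2, while roughly 98.4% of the /16 stays unallocated.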

This pattern can keep growing as needed by the systems until the /16 is completely used, or you may want to reserve an IP address range for systems that cross a third AZ. One way to manage this is to set aside a block of addresses for AZ C - perhaps anything with the third octet above 200, which could produce:
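One way to check that the numbering convention stays readable is to write it down as a function. The sketch below is only an illustration of the rule suggested above: the threshold of 200 comes from the text, while the function name `az_for` and the sample addresses are hypothetical.

```python
import ipaddress

# Assumption: third octets above 200 are held back for AZ C, as suggested above.
# Below that, odd third octets map to AZ A and even ones to AZ B.
AZ_C_THRESHOLD = 200


def az_for(ip: str) -> str:
    """Infer the AZ letter from an instance's private IPv4 address."""
    third_octet = ipaddress.ip_address(ip).packed[2]
    if third_octet > AZ_C_THRESHOLD:
        return "C"
    return "A" if third_octet % 2 else "B"


for sample in ("10.0.3.17", "10.0.4.9", "10.0.201.5"):
    print(sample, "-> AZ", az_for(sample))
```

Note that this only works as long as every subnet is created according to the convention; nothing in AWS enforces it.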

Keeping your VPC as simple as possible requires shared use of subnets across systems in most cases. It's fine to add some additional subnets with different purposes, but these should generally be required due to routing rules, not isolation of instances. To get our isolation, we'll...

Use Security Groups

Security Groups are built into AWS as a platform and act as port/protocol firewalls. Security Groups are very powerful when used as intended and allow you to use new security patterns. Because they are enforced above the EC2 instance layer, your EC2 instances will never see traffic that is blocked. VPCs contain SGs, so it's important to have a solid VPC design prior to creating your SGs.

In our scenario, each system could have two SGs - one for the web server(s) and one for the database server(s). The rules for these SGs might look something like:

DennisBasSG:

- allow port 22 from company IP range

and modifying the existing SGs:

DennisWebSecurityGroup:

- allow port 80 and 443 from anywhere

- allow port 22 from DennisBasSG

DennisDbSecurityGroup:

- allow port 3306 from DennisWebSecurityGroup

- allow port 22 from DennisBasSG

Now, our web and database servers won't receive traffic on port 22 unless it originates from a member of the DennisBasSG. If you have a schedule for maintenance, you could also create a separate SG for allowing bastion connections and remove instances from it unless they are being maintained.
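To see how rules that reference other SGs behave, here is a toy model in plain Python. It is not the AWS API and it ignores protocols, egress rules and connection state; it only captures the idea that a rule's source can be either a CIDR block or membership in another group. The `Rule` and `Instance` classes, the `allowed` helper, the instance addresses and the `203.0.113.0/24` company range are all hypothetical.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    port: int
    source: str  # a CIDR block, or the name of another security group


@dataclass(frozen=True)
class Instance:
    ip: str
    groups: frozenset = frozenset()


COMPANY_RANGE = "203.0.113.0/24"  # hypothetical company IP range

RULES = {
    "DennisBasSG": [Rule(22, COMPANY_RANGE)],
    "DennisWebSecurityGroup": [
        Rule(80, "0.0.0.0/0"),
        Rule(443, "0.0.0.0/0"),
        Rule(22, "DennisBasSG"),
    ],
    "DennisDbSecurityGroup": [
        Rule(3306, "DennisWebSecurityGroup"),
        Rule(22, "DennisBasSG"),
    ],
}


def allowed(src: Instance, dst: Instance, port: int) -> bool:
    """Return True if any rule on any of dst's groups admits src on this port."""
    for group in dst.groups:
        for rule in RULES[group]:
            if rule.port != port:
                continue
            if rule.source in RULES:
                # Source is another SG: match on group membership, not address.
                if rule.source in src.groups:
                    return True
            elif ip_address(src.ip) in ip_network(rule.source):
                return True
    return False


laptop = Instance("203.0.113.10")
bastion = Instance("10.0.1.10", frozenset({"DennisBasSG"}))
web = Instance("10.0.1.20", frozenset({"DennisWebSecurityGroup"}))
db = Instance("10.0.3.20", frozenset({"DennisDbSecurityGroup"}))

print(allowed(laptop, bastion, 22))  # company range may SSH to the bastion
print(allowed(laptop, web, 22))      # but not directly to the web server
print(allowed(bastion, db, 22))      # the bastion may SSH to the database
print(allowed(web, db, 3306))        # web servers may reach the database port
```

The point of the model is that none of the checks for internal traffic look at subnets or addresses, which is why the web and database servers can share subnets with other systems.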

This design allows you to fully utilize your address space while maintaining security and fidelity in the runtime for the systems. Used effectively, VPC is extremely flexible and powerful. In fact, there are some new patterns for fidelity, resilience and efficiency much greater than what is now possible on traditional hardware models... stay tuned!