If your organization uses Amazon Web Services (AWS) for cloud computing, chances are that Amazon S3, or Amazon Simple Storage Service, gets a lot of use. The object storage service was one of the first cloud services offered by AWS (way back in 2006!), and its ease of use, reliability, and scalability have proven incredibly popular.

However, mismanaging the configuration of your S3 resources can lead to significant security and compliance incidents. High-profile data breaches resulting from S3 misconfiguration events continue to make headlines, and you should expect more to come. All it takes is a single misconfigured S3 “bucket” access policy or encryption setting for sensitive data to wind up in the hands of bad actors.

Regardless of how the headline is written, in every one of these cases it was the cloud customer who was at fault, not AWS. AWS has an impeccable track record of upholding its end of the Shared Responsibility Model for Cloud Security, but it can’t prevent you from shooting yourself in the foot with your S3 configuration and landing your organization in the news.

Compliance Probably Governs Your Amazon S3 Usage

If your organization operates under a compliance regime such as HIPAA, PCI, SOC 2, GDPR, or NIST 800-53, know that they all include controls that govern the use and configuration of S3 (and many other AWS services your organization likely uses). If none of these compliance regimes apply to your organization or workloads, strongly consider adopting the AWS CIS Benchmark to help guide your safe use of AWS.

Maintain Access Control Over Your Amazon S3 Buckets

Many S3-related data breaches occur due to misconfigured access policies. When a new S3 bucket is created, the default access policy is set to “private,” but this can, and often does, get changed over the life of the resource. Cloud environments change as developers update applications and infrastructure and add new services, and access policies can be unintentionally weakened or disabled during these updates.

Conduct an audit of your S3 resources and their access policies to make sure they’re securely configured, and track any changes to these configurations using CloudTrail. For S3 buckets containing sensitive data, consider a product like Fugue, which detects (and can remediate) any “drift” from your established and secure configuration baseline without any additional coding or scripts.
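
As a rough illustration of tracking configuration changes, the sketch below filters CloudTrail-style records for S3 events that alter a bucket’s access or encryption settings. It assumes the records have already been loaded as Python dictionaries; the watched event list is an assumption to adjust for your own policy, and the sample records (account ID, user, bucket name) are made up.

```python
# Assumption: a subset of S3 management events that change bucket access or
# encryption settings. Adjust this set to match your own policy.
WATCHED_EVENTS = {
    "PutBucketAcl",
    "PutBucketPolicy",
    "DeleteBucketPolicy",
    "PutBucketEncryption",
    "DeleteBucketEncryption",
}


def bucket_config_changes(records):
    """Return (time, actor, event, bucket) tuples for watched S3 changes."""
    changes = []
    for record in records:
        if record.get("eventSource") != "s3.amazonaws.com":
            continue
        if record.get("eventName") not in WATCHED_EVENTS:
            continue
        changes.append((
            record.get("eventTime"),
            record.get("userIdentity", {}).get("arn"),
            record["eventName"],
            (record.get("requestParameters") or {}).get("bucketName"),
        ))
    return changes


# Made-up sample records for illustration only.
SAMPLE_RECORDS = [
    {
        "eventSource": "s3.amazonaws.com",
        "eventName": "PutBucketPolicy",
        "eventTime": "2024-01-01T10:00:00Z",
        "userIdentity": {"arn": "arn:aws:iam::123456789012:user/example-dev"},
        "requestParameters": {"bucketName": "example-bucket"},
    },
    {
        "eventSource": "s3.amazonaws.com",
        "eventName": "ListBuckets",
        "eventTime": "2024-01-01T10:05:00Z",
        "userIdentity": {"arn": "arn:aws:iam::123456789012:user/example-dev"},
        "requestParameters": None,
    },
    {
        "eventSource": "ec2.amazonaws.com",
        "eventName": "RunInstances",
        "eventTime": "2024-01-01T10:06:00Z",
        "userIdentity": {"arn": "arn:aws:iam::123456789012:user/example-ops"},
        "requestParameters": {},
    },
]

CHANGES = bucket_config_changes(SAMPLE_RECORDS)
```

Each tuple answers when the change happened, who made it, what changed, and on which bucket.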

The AWS CIS Benchmark provides a nice example of a compliance framework that governs S3 configuration and usage. CIS 2.6 requires bucket access logging to be enabled. CIS 3.8 requires a log metric filter and alarm to be enabled for all bucket policy changes. Finally, you must ensure that your S3 buckets containing sensitive data are not open to the world. Instead, create an AWS IAM policy that only allows authorized users access to the bucket.
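
One way to spot a bucket that is “open to the world” is to look for policy statements that allow any principal. The sketch below is a deliberately simplified check on a bucket policy document: it does not account for ACLs, account-level public access settings, or the full semantics of `Condition` blocks, and the bucket name in the example is hypothetical.

```python
from __future__ import annotations

import json


def _as_list(value):
    """Policy fields may hold a single value or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def public_statements(policy_json: str) -> list[dict]:
    """Return Allow statements that grant access to any principal.

    Simplification: a statement with any Condition is treated as not public.
    """
    policy = json.loads(policy_json)
    flagged = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal")
        # Both "*" and {"AWS": "*"} mean "anyone".
        if isinstance(principal, dict):
            values = [v for vals in principal.values() for v in _as_list(vals)]
        else:
            values = _as_list(principal)
        if "*" in values and "Condition" not in stmt:
            flagged.append(stmt)
    return flagged


# Hypothetical policy for illustration: the first statement is world-readable.
EXAMPLE_POLICY = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "PublicRead",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-bucket/*",
        },
        {
            "Sid": "TeamAccess",
            "Effect": "Allow",
            "Principal": {"AWS": "arn:aws:iam::123456789012:role/example-role"},
            "Action": "s3:*",
            "Resource": "arn:aws:s3:::example-bucket/*",
        },
    ],
})

FLAGGED = public_statements(EXAMPLE_POLICY)
```

In this example only the `PublicRead` statement is flagged; the statement scoped to a specific role is not.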

Use Encryption to Protect Your Amazon S3 Data

Maintaining secure access policies for your Amazon S3 resources is mandatory, but you also need to make sure you always have encryption enabled to prevent anyone with unauthorized access from reading the data. Again, you’ll want to audit your S3 resources to ensure encryption is enabled, and use logging to track any changes made to those resources, including instances where encryption is disabled.

Your compliance framework is likely going to apply here as well. Two examples include NIST 800-53 SC-13: Cryptographic Protection, which requires an organization to implement encryption where relevant, and SOC 2 CC 6.1, which requires encryption to supplement other measures used to protect data-at-rest.
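
An encryption audit can be sketched as a check over each bucket’s default-encryption settings. The example below assumes you have already collected, per bucket, a dictionary shaped like the S3 `GetBucketEncryption` response (an empty dictionary standing in for “no configuration found”); how that data is gathered is left out, and the bucket names are hypothetical.

```python
def default_encryption_algorithm(encryption_response):
    """Return the default SSE algorithm, or None if no default rule exists."""
    config = encryption_response.get("ServerSideEncryptionConfiguration", {})
    for rule in config.get("Rules", []):
        default = rule.get("ApplyServerSideEncryptionByDefault", {})
        if default.get("SSEAlgorithm"):
            return default["SSEAlgorithm"]
    return None


def unencrypted_buckets(encryption_by_bucket):
    """Return the sorted names of buckets with no default encryption rule."""
    return sorted(
        name
        for name, response in encryption_by_bucket.items()
        if default_encryption_algorithm(response) is None
    )


# Hypothetical audit input for illustration only.
AUDIT_INPUT = {
    "example-customer-data": {
        "ServerSideEncryptionConfiguration": {
            "Rules": [
                {"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": "aws:kms"}}
            ]
        }
    },
    "example-scratch": {},
}

MISSING_ENCRYPTION = unencrypted_buckets(AUDIT_INPUT)
```

Running the same check on a schedule and comparing results over time is one simple way to notice when encryption has been disabled.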

So, You’re Responsible for AWS Security and Compliance!

While every organization is different, the responsibility for the security of critical data on AWS usually falls on a cloud security engineer, a DevOps engineer, a compliance analyst, or a cloud architect. Sometimes some or all of these roles share responsibility for cloud security, which makes effective collaboration (often referred to as “DevSecOps”) a must.

No matter your role or title, if you’re responsible for the security and compliance of your AWS environment, a big part of that is ensuring that critical S3 resources are properly configured, and that they stay that way throughout the life of the resource.

Job #1: Certify that Your S3 Configurations Comply with Policy

If you haven’t already, you’ll need to certify that the configuration of your existing S3 resources in your AWS environment adheres to applicable compliance and security policies. This usually means an audit to ascertain the configuration of your S3 resources, whether manually using the AWS Console or with an auditing tool.

You should perform audits of all your AWS environments regularly and often. For critical resources, you’ll want to continuously audit them using a tool that scans resources and validates their configuration against policy and reports on compliance violations and your overall security posture. Make sure you’re able to detect any “drift” from the original S3 configuration so you can determine if the change violates policy.
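
Detecting drift comes down to comparing a resource’s current configuration with the configuration you originally certified. The sketch below does this for a flat dictionary of settings; the setting names (`acl`, `encryption`, `logging_enabled`) are hypothetical stand-ins for whatever your audit actually records.

```python
def detect_drift(baseline, current):
    """Return {setting: (expected, actual)} for every setting that differs."""
    drift = {}
    for key in sorted(set(baseline) | set(current)):
        expected, actual = baseline.get(key), current.get(key)
        if expected != actual:
            drift[key] = (expected, actual)
    return drift


# Hypothetical settings for one bucket, for illustration only.
BASELINE = {"acl": "private", "encryption": "aws:kms", "logging_enabled": True}
CURRENT = {"acl": "public-read", "encryption": "aws:kms", "logging_enabled": True}

DRIFT = detect_drift(BASELINE, CURRENT)
```

Here the result records that `acl` changed from `private` to `public-read`, which is the detail a reviewer needs to decide whether the change violates policy.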

You’ll also need to work closely with your application and DevOps teams to certify that what they’re doing (e.g., deploying new environments or updating existing ones) complies with relevant policy. Because this is usually a time-consuming and error-prone manual process, your team should look for ways to “shift left” by baking policy checks in earlier in your software development life cycle (SDLC), when making corrective changes is easier, faster, and less expensive.

Job #2: Identify and Remediate Amazon S3 Misconfiguration Events

OK, you’ve certified that your AWS S3 resources comply with policy and are configured securely. Awesome! Now comes the hard part: Making sure they stay that way.

Configuration drift for cloud infrastructure resources is a pervasive and often risky problem. S3 bucket configurations can be modified through the AWS Console or through the AWS Application Programming Interface (API), which a variety of automation tools also use. You’ll likely find that S3 configurations (along with those of a number of other AWS services) change frequently, sometimes taking them out of compliance and creating security vulnerabilities.

You need a tool that scans your AWS environment and alerts you on S3 configuration violations. You’ll want the ability to ignore alerts for S3 buckets that are intended to have public access (such as those hosting static websites) while flagging misconfiguration for those that should be private and encrypted. Alerts should provide enough information about the misconfiguration to ease manual remediation.
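
The alerting behavior described above can be sketched as a scan that skips buckets on an approved-public list and attaches a remediation hint to each finding. The `BucketState` type, the bucket names, and the hint text are all hypothetical; a real scanner would populate this state from your AWS environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BucketState:
    name: str
    public: bool
    encrypted: bool


def build_alerts(buckets, intended_public):
    """Return (bucket, issue, hint) tuples, skipping approved public buckets."""
    alerts = []
    for bucket in buckets:
        if bucket.name in intended_public:
            continue  # e.g. a static website bucket that is public by design
        if bucket.public:
            alerts.append((
                bucket.name,
                "publicly accessible",
                "restrict the bucket policy or ACL to authorized principals",
            ))
        if not bucket.encrypted:
            alerts.append((
                bucket.name,
                "default encryption disabled",
                "re-enable default server-side encryption",
            ))
    return alerts


# Hypothetical scan results for illustration only.
SCANNED = [
    BucketState("example-static-site", public=True, encrypted=False),
    BucketState("example-customer-data", public=True, encrypted=True),
    BucketState("example-logs", public=False, encrypted=False),
]

ALERTS = build_alerts(SCANNED, intended_public={"example-static-site"})
```

The static site bucket produces no alerts, while the other two buckets each produce one finding with a hint for whoever remediates it manually.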

For critical S3 buckets that contain sensitive data, it’s mandatory that you graduate from manual processes and automatically remediate misconfiguration events when they occur. No manual process can bring your Mean Time To Remediation (MTTR) down to a safe level for critical misconfiguration events because the threats that seek to exploit cloud infrastructure vulnerabilities like misconfigured S3 buckets are themselves automated. Over time, you’ll find that automated remediation saves so much effort that you’ll want to use it for more of your cloud resources.

Remediating AWS Misconfiguration Automatically

An efficient and comprehensive approach to automated remediation for AWS misconfiguration is using baselining to make your critical AWS infrastructure self-healing. With this approach, you establish a known-good infrastructure baseline that complies with policy. Once your baseline is established, detect and review any drift from the baseline. For critical resources, you should automatically revert drift events back to the established baseline. Baselining eliminates the need to predict what could go wrong and create blacklists, and baselines provide a mechanism to shift left on security and compliance.
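
To make the baselining idea concrete, here is a minimal sketch of reverting drift: every setting that differs from the known-good baseline is reapplied. This is not how Fugue implements it; `apply_setting` is a hypothetical callback standing in for whatever actually changes the resource, and the setting names are made up. Settings that exist only outside the baseline are not handled here.

```python
def revert_to_baseline(baseline, current, apply_setting):
    """Reapply each drifted baseline setting; return the keys that were reverted."""
    reverted = []
    for key, expected in baseline.items():
        if current.get(key) != expected:
            apply_setting(key, expected)
            reverted.append(key)
    return reverted


# Hypothetical baseline and live state for one bucket, for illustration only.
KNOWN_GOOD = {"acl": "private", "encryption": "aws:kms"}
LIVE_STATE = {"acl": "public-read", "encryption": "aws:kms"}

# In this demo, "applying" a setting just updates the in-memory state.
REVERTED = revert_to_baseline(KNOWN_GOOD, LIVE_STATE, LIVE_STATE.__setitem__)
```

Because the function only needs the baseline and the current state, no list of forbidden configurations has to be written in advance.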

Fugue provides for self-healing cloud infrastructure. Watch our Webinar to learn more.

Job #3: Report on AWS S3 Misconfiguration Events

In addition to requiring periodic compliance and security audit reports, most organizations require a report for every misconfiguration event that concerns a critical resource like Amazon S3. These reports typically require the following information:

- Which resource was affected (and in which environment)?

- What configuration changed?

- When did the misconfiguration occur?

- Who was responsible for the misconfiguration?

- What (if any) policy was violated with this misconfiguration?

- When was the misconfiguration detected?

- When was the misconfiguration remediated (and verified)?

- Who remediated the misconfiguration (or how was it remediated)?

- What is being done to ensure such misconfiguration events never happen again?

You’ll need to pull in a lot of log data to compile such a report, so automation can help here as well. As for the last requirement, automated remediation is the only way to prevent such misconfiguration events from recurring.
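
The questions above map naturally onto a structured record that tooling can fill in and export. The sketch below shows one possible shape; the field names are assumptions rather than any standard, and every value in the example is invented.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class MisconfigurationReport:
    resource: str                   # which resource was affected
    environment: str                # and in which environment
    change: str                     # what configuration changed
    occurred_at: datetime           # when the misconfiguration occurred
    actor: str                      # who was responsible
    policy_violated: Optional[str]  # what policy was violated, if any
    detected_at: datetime           # when it was detected
    remediated_at: datetime         # when it was remediated and verified
    remediated_by: str              # who or what remediated it
    prevention: str                 # what is being done to prevent a repeat

    def to_json(self):
        """Serialize the report, writing datetimes in ISO 8601 form."""
        return json.dumps(asdict(self), default=lambda v: v.isoformat(), indent=2)


# Invented example values for illustration only.
REPORT = MisconfigurationReport(
    resource="example-customer-data",
    environment="production",
    change="bucket ACL changed from private to public-read",
    occurred_at=datetime(2024, 1, 1, 10, 0, tzinfo=timezone.utc),
    actor="arn:aws:iam::123456789012:user/example-dev",
    policy_violated="example-policy-id",
    detected_at=datetime(2024, 1, 1, 10, 2, tzinfo=timezone.utc),
    remediated_at=datetime(2024, 1, 1, 10, 3, tzinfo=timezone.utc),
    remediated_by="automated revert to baseline",
    prevention="bucket added to automated remediation",
)

REPORT_JSON = REPORT.to_json()
```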

Consider measuring your mean time to remediation (MTTR) for misconfiguration of critical cloud resources to track the resilience of your cloud security efforts. An MTTR that’s measured in many hours or days should be considered unacceptably risky considering the automated threats that seek to exploit such misconfiguration.
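
MTTR is simply the average time between a misconfiguration and its remediation. The sketch below assumes the clock starts when the misconfiguration occurred (you could equally start it at detection) and uses invented timestamps.

```python
from datetime import datetime, timedelta


def mean_time_to_remediation(events):
    """Average remediation time over (occurred_at, remediated_at) pairs."""
    durations = [remediated - occurred for occurred, remediated in events]
    if not durations:
        raise ValueError("at least one event is required")
    return sum(durations, timedelta()) / len(durations)


# Invented events for illustration: remediated after 10 and 30 minutes.
SAMPLE_EVENTS = [
    (datetime(2024, 1, 1, 10, 0), datetime(2024, 1, 1, 10, 10)),
    (datetime(2024, 1, 2, 9, 0), datetime(2024, 1, 2, 9, 30)),
]

MTTR = mean_time_to_remediation(SAMPLE_EVENTS)  # timedelta of 20 minutes
```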

One more thing…

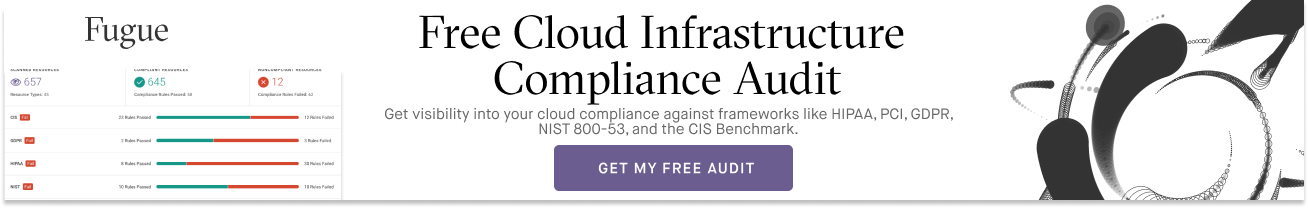

If you’re operating at scale in the cloud and care about the security and compliance of your cloud infrastructure environments, Fugue can help. With Fugue, you can:

- Validate compliance for your cloud environments against a number of policy frameworks like HIPAA, PCI, SOC 2, NIST 800-53, ISO 27001, and GDPR.

- Get complete visibility into your cloud environments and configurations with cloud infrastructure baselining and drift detection.

- Protect against cloud infrastructure misconfiguration, security incidents, and compliance violations with self-healing infrastructure.

- Shift left on cloud infrastructure security and compliance with CI/CD integration to help your developers move fast and safely.

- Get continuous compliance visibility and reporting across your entire enterprise cloud footprint.

Schedule a free cloud security workshop or compliance audit to get a handle on your cloud security posture and learn how Fugue can help.