Enterprise cloud adoption is in full swing, and cloud security and compliance have become a top priority as a result. Security in the cloud requires different approaches than in the datacenter, and a different mindset. Movements like DevOps, DevSecOps, and Shift Left demonstrate this: they have begun to transform how Cloud Security Posture Management (CSPM) is done, through automation with tools like infrastructure as code and policy as code.

These approaches (and the nature of cloud itself) show that we can be more secure in the cloud than we ever could in the datacenter. However, this transformation has also produced some dangerous assumptions that can lead to complacency and a false sense of security. I’ve identified a few “myths” that I’d like to dispel, and I’ll share some tips on how you can avoid getting burned by them.

Myth #1: Every change to production goes through the automated pipeline

If your team has adopted an automated CI/CD pipeline that integrates policy checks for security and compliance, then congratulations are in order! You’re ahead of the pack when it comes to “Shift Left.”

But shifting left doesn’t mean you’ve removed the need to stay focused on the security of the “right side” of the software development life cycle (SDLC), specifically production environments. Why? Engineers are still going to make changes to production manually, perhaps by creating a new security group (i.e., firewall) rule to perform some maintenance and forgetting to close the door once they’re finished. Many of our customers are initially surprised to discover just how much drift occurs in their cloud environments once they start looking for it.

Note: If bad actors gain access to your environment, they’re going to be keen on changing infrastructure configurations to steal data, do damage, and hide their tracks (e.g., turning off encryption, deleting tags, disabling logging). See Cloud Infrastructure Drift: The Good, The Bad, and The Ugly.

How to Avoid Getting Burned

By accepting that some change will always occur outside of your automated pipeline, you can focus on effectively managing this drift to keep your cloud secure.

- Understand the complete state of your production environments by baselining your infrastructure. With cloud APIs, it is now possible to discover everything you have running and all configuration attributes, something that was impossible in the datacenter.

- Continuously survey the configuration state of your infrastructure and compare it to the baseline you established. This will allow you to identify drift from your baseline to understand change as it happens and identify risky misconfigurations.

- Implement automated remediation for critical cloud resources. You likely have some security-critical resources (e.g., certain security groups and S3 buckets) where any configuration drift represents significant risk. Automatically remediate drift for these resources.
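The baseline-and-compare loop described in the bullets above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular product does it: the snapshots are hypothetical in-memory dictionaries keyed by made-up resource IDs, and `detect_drift` and `MISSING` are names invented for this example. In practice the `current` snapshot would be gathered from your cloud provider's APIs.

```python
from typing import Any

MISSING = "<missing>"  # sentinel for attributes absent on one side


def detect_drift(baseline: dict[str, dict[str, Any]],
                 current: dict[str, dict[str, Any]]) -> dict[str, dict[str, tuple]]:
    """Compare two snapshots keyed by resource ID.

    Returns {resource_id: {attribute: (baseline_value, current_value)}}
    for every attribute whose value differs between the snapshots.
    """
    drift: dict[str, dict[str, tuple]] = {}
    for resource_id in sorted(baseline.keys() | current.keys()):
        before = baseline.get(resource_id, {})
        after = current.get(resource_id, {})
        changed = {
            attr: (before.get(attr, MISSING), after.get(attr, MISSING))
            for attr in sorted(before.keys() | after.keys())
            if before.get(attr, MISSING) != after.get(attr, MISSING)
        }
        if changed:
            drift[resource_id] = changed
    return drift


# Hypothetical snapshots: a security group gained an SSH rule
# and a bucket lost its encryption setting.
baseline = {
    "sg-web": {"ingress_ports": [443]},
    "bucket-logs": {"encrypted": True, "logging": True},
}
current = {
    "sg-web": {"ingress_ports": [22, 443]},
    "bucket-logs": {"encrypted": False, "logging": True},
}

for resource_id, changes in detect_drift(baseline, current).items():
    for attr, (before, after) in changes.items():
        print(f"{resource_id}: {attr} drifted from {before!r} to {after!r}")
```

Running the comparison on a schedule, or on every change event, is what turns a one-time baseline into continuous drift detection.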

Rule of Thumb: Shift Left on cloud security is awesome, but Shift Whole is more awesome. Take a holistic approach to security and compliance throughout the entire SDLC.

Myth #2: Validating infrastructure as code files against policy is sufficient

One of the first things IT teams realize when adopting cloud is that doing everything in the cloud console doesn’t scale. Thanks to infrastructure as code (IaC) tools like HashiCorp Terraform and AWS CloudFormation, we can express the cloud resources we need in config files and build and update infrastructure in an efficient and scalable way.

It’s tempting then to validate our IaC files against policy to know if the cloud infrastructure we’re going to build will be secure and compliant. While doing so is a recognition that shift left must include infrastructure security as well as application security, there are unfortunately some flaws with this approach that can lead to misconfiguration vulnerabilities.

Problem #1: Individual IaC files don’t tell the whole story

It’s rare to have a single infrastructure as code file that encompasses an entire cloud environment. Rather, you often break down your infrastructure environments into a number of smaller, more manageable IaC files and combine those in different ways to build infrastructure. You may have one file that defines IAM roles and policies, another for VPC networks, a third for EC2, and so on. It’s not possible to adequately validate a file defining IAM, for instance, without understanding the context in which these IAM policies will be used.
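To make the IAM example concrete, here is a small sketch of why one file can’t be judged in isolation. Everything in it is hypothetical: the role, the bucket names, the `sensitive` flag, and the reduced policy shape are invented for illustration, and `fnmatchcase` is only a rough stand-in for how IAM evaluates wildcards.

```python
from fnmatch import fnmatchcase

# Hypothetical contents of two separate IaC files, reduced to plain data.
iam_file = {
    "role": "report-generator",
    "allow": {"actions": ["s3:GetObject"], "resources": ["arn:aws:s3:::acme-*"]},
}
storage_file = {
    "arn:aws:s3:::acme-public-assets": {"sensitive": False},
    "arn:aws:s3:::acme-payroll-exports": {"sensitive": True},
}


def sensitive_resources_reachable(policy: dict, inventory: dict) -> list[str]:
    """List sensitive resources whose ARN matches any pattern in the policy.

    fnmatchcase approximates wildcard matching for this sketch only.
    """
    patterns = policy["allow"]["resources"]
    return sorted(
        arn
        for arn, attrs in inventory.items()
        if attrs["sensitive"] and any(fnmatchcase(arn, p) for p in patterns)
    )


# The IAM file alone says nothing about what "acme-*" will match.
# Combined with the storage file, the same policy reaches payroll data.
print(sensitive_resources_reachable(iam_file, storage_file))
```

The policy text never changes, but whether it is acceptable depends entirely on what the other files create.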

Problem #2: IaC files don’t include every configuration attribute

IaC files don’t include every configuration attribute for every cloud resource they define. For example, if you’re defining an AWS VPC in Terraform, you may only include the configuration details that you need for your application to work, such as which ports should allow ingress on a security group. Or the template may reference a VPC that it does not define, with AWS filling in the remaining details with default configurations. When looking at an IaC config file, you’re only seeing part of the picture you’ll create with it.
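A short sketch shows the gap between what a file declares and what actually gets built. The `PROVIDER_DEFAULTS` table below is an assumption made for illustration; real defaults depend on the provider, the service, and the API version, so treat the values as placeholders rather than a reference.

```python
from typing import Any

# Illustrative defaults only; check your provider's documentation for real values.
PROVIDER_DEFAULTS: dict[str, Any] = {
    "enable_dns_support": True,
    "enable_dns_hostnames": False,
    "instance_tenancy": "default",
}


def effective_config(declared: dict[str, Any], defaults: dict[str, Any]) -> dict[str, Any]:
    """Merge declared attributes over defaults, as the provider would at build time."""
    return {**defaults, **declared}


def undeclared_attributes(declared: dict[str, Any], defaults: dict[str, Any]) -> list[str]:
    """Attributes that will exist on the running resource but never appear in the file."""
    return sorted(set(defaults) - set(declared))


# What the IaC file says about the VPC.
declared_vpc = {"cidr_block": "10.0.0.0/16"}

print("declared: ", declared_vpc)
print("effective:", effective_config(declared_vpc, PROVIDER_DEFAULTS))
print("invisible to file-level validation:", undeclared_attributes(declared_vpc, PROVIDER_DEFAULTS))
```

A policy check that only reads the file sees one attribute; the running resource has four.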

Problem #3: IaC languages are dynamic and must be resolved at runtime

IaC is typically written in data serialization languages or text formats, such as YAML or JSON, which aren’t ideal for automated validation. The same infrastructure can be expressed in many different ways using IaC languages, and they can be non-deterministic, producing different results on different runs. And because IaC languages are dynamic and make callouts at runtime, you need to resolve them at runtime to truly know what you’ll have.
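As a small illustration of the “many different ways” problem, the two JSON documents below describe the same ingress rules in different shapes. The document format is made up for this example and does not correspond to any real IaC tool; the point is only that a validator has to resolve both into a common form before it can reason about them.

```python
import json

# Two hypothetical descriptions of the same security group rules.
doc_a = '{"ingress": [{"port": 443, "cidr": "10.0.0.0/8"}, {"port": 80, "cidr": "10.0.0.0/8"}]}'
doc_b = '{"ingress": [{"cidr": "10.0.0.0/8", "ports": [80, 443]}]}'


def normalize(doc: str) -> frozenset[tuple[int, str]]:
    """Resolve a document into a set of (port, cidr) pairs."""
    rules = set()
    for rule in json.loads(doc)["ingress"]:
        # Accept either a single "port" or a list of "ports".
        ports = rule["ports"] if "ports" in rule else [rule["port"]]
        for port in ports:
            rules.add((port, rule["cidr"]))
    return frozenset(rules)


print("same text:    ", doc_a == doc_b)
print("same resolved:", normalize(doc_a) == normalize(doc_b))
```

A check written against the text of one form can silently miss the other, which is one reason to validate the resolved result instead of the source file.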

How to Avoid Getting Burned

Give developers tools to validate their dev environments against policy so they can get actionable feedback to fix problems early in the SDLC. Validate staging environments against policy and prohibit any deployment to production if it fails. It’s not a bad thing if developers validate their IaC files as they go—they’ll likely catch some things earlier and that can help them move faster. But before deploying to production, it’s imperative to validate your staging environment against policy.
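Here is a minimal sketch of that staging gate, written in plain Python as a stand-in for a real policy engine. The rule set, the resource shapes, and the names `evaluate` and `may_promote` are all assumptions for illustration; in a real pipeline the staging snapshot would come from the running environment and the rules from your policy framework.

```python
from typing import Any, Callable

Resource = dict[str, Any]
Rule = Callable[[Resource], bool]  # returns True when the resource complies

# Hypothetical rules for illustration only.
RULES: dict[str, Rule] = {
    "buckets must be encrypted at rest":
        lambda r: r["type"] != "bucket" or r.get("encrypted") is True,
    "buckets must have access logging enabled":
        lambda r: r["type"] != "bucket" or r.get("logging") is True,
}


def evaluate(resources: list[Resource], rules: dict[str, Rule]) -> list[str]:
    """Return one message per (resource, rule) pair that fails."""
    return [
        f"{resource['id']}: {name}"
        for resource in resources
        for name, rule in rules.items()
        if not rule(resource)
    ]


def may_promote(resources: list[Resource], rules: dict[str, Rule]) -> bool:
    """Allow promotion to production only when staging has no violations."""
    return not evaluate(resources, rules)


# Hypothetical snapshot of a running staging environment.
staging = [
    {"id": "bucket-uploads", "type": "bucket", "encrypted": True, "logging": False},
    {"id": "sg-web", "type": "security_group"},
]

for violation in evaluate(staging, RULES):
    print("FAIL", violation)
print("promote to production:", may_promote(staging, RULES))
```

The same `evaluate` function can give developers early feedback in dev environments, while `may_promote` is the hard stop in front of production.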

Check out Regula, an open source tool for validating Terraform for policy compliance using Open Policy Agent, an open source policy engine for policy as code.

Rule of Thumb: A running cloud environment is the only truth you can trust. If it’s on paper or in a config file, it’s not truth, so don’t trust it.

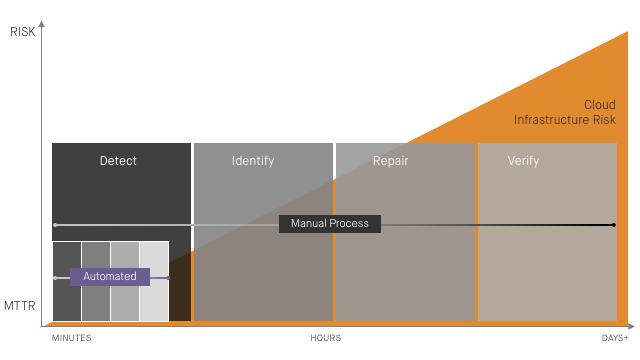

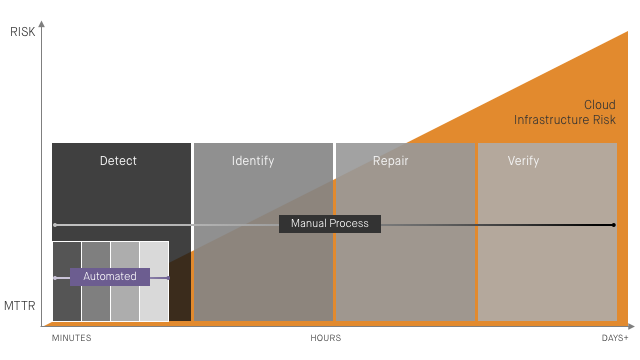

Myth #3: We can keep our cloud secure without automated remediation

There’s a long-standing aversion to security automation, and for good reason. Security teams prefer to identify incidents, isolate resources, and perform forensics, rather than use automation to fix them. Application teams distrust automated remediation because they’ve likely been burned by it in the past. Perhaps a false positive alert triggered automation that closed a good port or took out their DNS servers.

But enterprise cloud environments are too vast, complex, and dynamic to manage misconfiguration with manual processes and expect to keep sensitive data secure and environments in compliance (see 12 Ways Cloud Upended IT Security). Ask anyone managing the security of at-scale cloud environments how often they experience infrastructure drift. I guarantee the answer will be along the lines of “all of the time!”

If you’re relying on humans to review alerts and fix misconfiguration issues, you’re putting your organization at risk. Alert fatigue, human error, and a Mean Time to Remediation measured in hours or days all represent tempting opportunities for bad actors armed with their own automated exploits.

How to Avoid Getting Burned

We touched on this when discussing how to avoid getting burned by Myth #1, but we’ll dig in more here. Identify the security-critical cloud resources in your environment, validate that they adhere to policy, and put automated remediation in place for them. Recognize that cloud resource misconfiguration isn’t itself a security incident, but it can certainly lead to one.

To maintain business continuity and build trust between teams, automated remediation should correct misconfiguration back to the known-good baseline that was originally provisioned. The configurations for security-critical cloud resources don’t typically need to change that often, so it should be easy to get everyone on the same page with automation here. Avoid bespoke automation scripts, often called “lambdas” or “bots,” as these can result in unintentionally destructive changes and a loss of trust with application teams.
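The idea of reverting only to a known-good baseline, and only for resources everyone has agreed are security-critical, can be sketched as follows. This is an in-memory illustration under stated assumptions: the resource IDs and attributes are hypothetical, `plan_remediation` and `remediate` are names invented here, and a real implementation would apply the plan through the cloud provider's APIs.

```python
from typing import Any


def plan_remediation(baseline: dict[str, Any], current: dict[str, Any]) -> dict[str, Any]:
    """Return the baseline values that must be re-applied to undo drift."""
    return {attr: value for attr, value in baseline.items() if current.get(attr) != value}


def remediate(resource_id: str,
              baseline: dict[str, Any],
              current: dict[str, Any],
              security_critical: set[str]) -> dict[str, Any]:
    """Revert drift for security-critical resources; leave everything else as found.

    Attributes that do not appear in the baseline are left untouched in this sketch.
    """
    restored = dict(current)
    if resource_id in security_critical:
        restored.update(plan_remediation(baseline, current))
    return restored


# Hypothetical example: someone opened SSH on a database security group.
critical = {"sg-db"}
baseline = {"ingress_ports": [5432], "logging": True}
drifted = {"ingress_ports": [22, 5432], "logging": True}

print("plan:    ", plan_remediation(baseline, drifted))
print("critical:", remediate("sg-db", baseline, drifted, critical))
print("other:   ", remediate("sg-dev", baseline, drifted, critical))
```

Because the only possible outcome is the configuration the application team originally provisioned, this kind of remediation is predictable in a way that ad hoc scripts are not.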

Rule of Thumb: Always use automated remediation to protect security-critical cloud resources from misconfiguration vulnerabilities. A reliance on manual remediation introduces unnecessary risk.

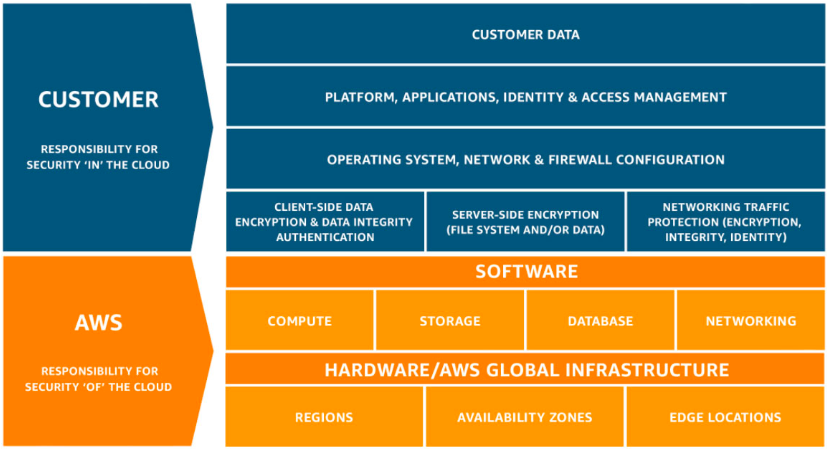

Myth #4: Our cloud provider is responsible for infrastructure security

This myth is an incredibly dangerous assumption to make. And with all of the headlines about “cloud breaches” in the news, it seems this old myth is still alive and kicking.

When moving to the cloud, it’s important to understand the Shared Responsibility Model. In short, the cloud service provider is responsible for “security of the cloud,” including the physical plant and hardware, and the cloud customer is responsible for “security in the cloud,” which includes how you configure and use your cloud services.

The following diagram is from Amazon Web Services (AWS), but the concept holds true whether you’re using cloud providers like AWS, Microsoft Azure, or Google Cloud Platform.

How to Avoid Getting Burned

Recognize that the responsibilities of cloud customers include not only the application and OS layers but also the configuration of cloud infrastructure resources. Apply a policy framework like the CIS AWS Foundations Benchmark to your cloud infrastructure and continuously monitor for drift and misconfiguration. If you’re new to cloud and want to understand how it differs from the datacenter in terms of security and compliance, check out our post on the 12 Ways Cloud Upended IT Security.

Rule of Thumb: Understand the Shared Responsibility Model of Cloud and pay attention to cloud resource configuration, and misconfiguration.

Myth #4b: We’re using serverless, so there’s no cloud infrastructure to secure

Serverless doesn’t have servers, right? Well, of course there are servers “under the hood,” but we don’t need to worry about them! We’re big fans of serverless here at Fugue and make significant use of AWS Lambda and Step Functions. It’s nice not having to worry about traditional server maintenance and security, but we all know that we need to make sure we’re using serverless functions in a secure and compliant way.

However, what’s dangerous is assuming that when using serverless, there’s no other cloud infrastructure to keep secure and compliant. This is the younger cousin to Myth #4, and until I heard it a few times, I didn’t think it was real.

How to Avoid Getting Burned

Recognize that, even if you’re making extensive use of serverless resources, you likely still have security-critical resources like networks, IAM roles, databases, messaging services, and storage. Make sure you’re including those in your cloud security posture management.

Rule of Thumb: Recognize that innovative new cloud services require new security responsibilities, and rarely do they relieve you of old ones.

Myth #5: Getting cloud security right will slow down or limit developers

Developers need to move fast to help organizations stay competitive, and security and compliance teams need to slow things down to ensure security policies are met, right? Well, that’s traditionally been the way things operate, and these competing incentives all too often pit teams against each other.

And in cloud, this friction has been amplified. Developers have direct access to on-demand cloud resources. And they’re going to take advantage of this, often without checking in with security and compliance teams, who then struggle to gain visibility and ensure policy adherence.

All too often, the answer is to turn the cloud into a datacenter. Policy checklists, manual approvals and audits, provisioning guardrails, and restricting cloud console access can provide some sense of control and security, but these measures strip away many of the advantages of adopting the cloud in the first place.

How to Avoid Getting Burned

The cloud gives us the means to end the tension between application teams and security/compliance teams. Leverage cloud APIs to gain continuous visibility into your environments and compliance posture. Help developers Shift Left on security and compliance with policy as code automation tools to quickly identify and fix issues early in the software development cycle. In the cloud, security and compliance professionals can become heroes by helping application teams move faster and safer than was ever possible in the datacenter.

Rule of Thumb: Empower developers with the automation tools to check their own work against policy before they deploy to production.

One More Thing…

If you’re operating at scale in the cloud and care about the security and compliance of your cloud infrastructure environments, Fugue can help. With Fugue, you can:

- Validate your cloud environments against a number of compliance policy frameworks like HIPAA, PCI, SOC 2, NIST 800-53, ISO 27001, and GDPR.

- Baseline your cloud environments to get complete and accurate visibility into your cloud infrastructure and configurations.

- Detect baseline drift and make critical resources self-healing to protect against misconfiguration, security incidents, and compliance violations.

- Shift Left on cloud infrastructure security and compliance with CI/CD integration to help your developers move fast and safely.

- Implement continuous compliance visibility and reporting across your entire enterprise cloud footprint.

Schedule a free cloud security workshop or compliance audit to get a handle on your cloud security posture and learn how Fugue can help.